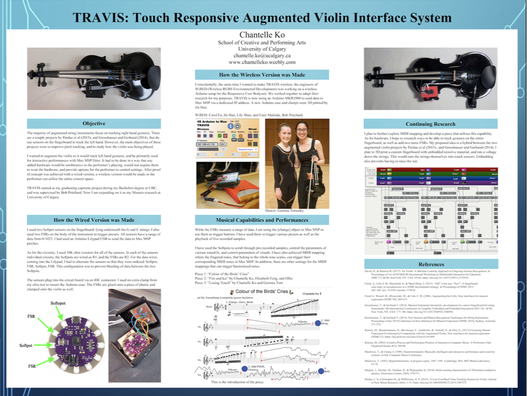

There are two versions of TRAVIS, I and II. TRAVIS I uses two Softpot sensors on the fingerboard to control sound effects, and two FSRs (force-sensing resistors) to trigger ("bang") presets. The data is sent to Max/MSP/Jitter patches that control audio and video. My wired prototype uses an Arduino LilyPad USB microcontroller. I built it myself, under the supervision of Dr. Bob Pritchard.

The wireless version uses an Arduino MKR1000. A group of engineering students who collaborated with SUBCLASS (Jin Han, Esther Mutinda, Carol Fu, and Lily Shao) used the same MKR1000 in their own capstone project for RUBS (Responsive User Bodysuit). They named their capstone WiRED (Wireless RUBS Environment Development). I have been modifying their work for my own purposes, and I have also collaborated with them on pieces that use RUBS. Dr. Bob Pritchard continues the RUBS project with TASTE.

TRAVIS II was made in collaboration with Lora Oehlberg and luthier Aaron Pratte. It has four touch sensors made from conductive 3D-printed PLA, with a voltage running down the strings, and four round FSRs clamped to the body. It uses the same Arduino MKR1000 as the wireless version of TRAVIS I, but multiplexed to read 8 analog sensors. It was presented at NIME 2020 and published in the Computer Music Journal.

Videos of performances and demos are here.

I post the latest updates for my research on my TRAVIS blog, here.

I have gone through many troubleshooting steps with the wireless version. I have summarized my problems and solutions here.

TRAVIS II: 4 string touch sensors, 4 FSRs, and wireless

TRAVIS II: electronics unplugged, so the violin can also be used in traditional contexts

Events and Publications

Chantelle Ko, Lora Oehlberg; Construction and Performance Applications of an Augmented Violin: TRAVIS II. Computer Music Journal 2021; 44 (2-3): 55–68. doi: https://doi.org/10.1162/comj_a_00563

Paper presentation, Touch Responsive Augmented Violin Interface System II: Integrating Sensors into a 3D Printed Fingerboard. NIME 2020 (New Interfaces for Musical Expression). https://nime2020.bcu.ac.uk/#top

https://www.nime.org/proceedings/2020/nime2020_paper32.pdf

Performing an improvisation with TRAVIS II in Ned Rush's "More Kicks than Friends" micro festival for charity. May 2nd, 2020 https://www.youtube.com/watch?v=uV1WqxwneBs

Kindred Dichotomy, a duet for both TRAVIS I and II, performed at AWMAS 2020 (Alliance of Women in Media Arts and Science): https://awmas2020.wixsite.com/home

Crossing Boundaries at IAST 2018 (Interactive Art, Science, and Technology in Western Canada): Poster presentation at the University of Lethbridge on Oct 27th, 2018. http://iast.ca/crossing-boundaries/

Graduate Colloquium at the University of Calgary. Sept 28th, 2018 @ 2pm; Doolittle Studio.

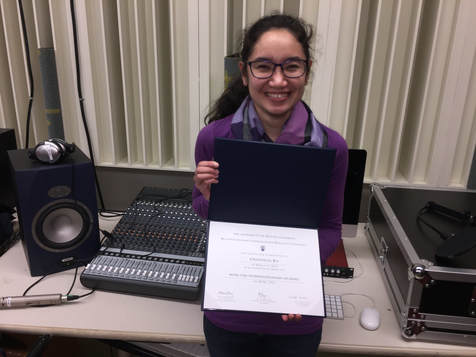

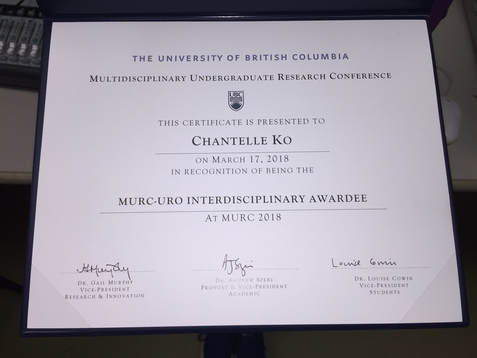

MURC 2018 at UBC, where I received the MURC-URO Interdisciplinary Award.

MURC: https://students.ubc.ca/career/career-events/multidisciplinary-undergraduate-research-conference

MURC 2018 Program: https://students.ubc.ca/sites/students.ubc.ca/files/MURC%202018%20Program%20Guide_4.pdf

ICICS News: http://icics.ubc.ca/news-events/

UBC School of Music Recognition:

https://music.ubc.ca/blog/2018/4/5/catching-up-with-our-students-spring-2018

https://www.facebook.com/UBC.School.of.Music/videos/1808104445869305/

https://music.ubc.ca/hot-sheet/2018/2/13/hot-sheet-for-the-week-of-feb-13-2018

https://music.ubc.ca/blog/2019/3/25/subclass-story

TRAVIS II Objectives

All augmented violins share something in common, and it's not just that they are based on the violin. Their creators all have clear goals they want to achieve, problems they want to solve, and priorities that rank one goal over another. These goals guide the construction of the instrument, so in terms of hardware there is usually a compromise between competing concerns. For example, several projects augment the bow by adding electronics to the frog, such as the K-Bow and Diana Young's Hyperbow. The added weight shifts the bow's balance point, so the tradeoff is sacrificing the bow's natural weight for the electronics. Mari Kimura is strongly against the use of foot pedals, which means her glove system may not suit the musical style or preferences of a player who likes having an explicit trigger mechanism. In Laurel Pardue et al.'s touch-sensor system, they prioritized low-cost electronics and the ability to remove all electronics from the violin. As a result, their system may not be as robust as it could have been with other electronic materials. Tobias Grosshauser and Gerhard Tröster's sensor fingerboard is more robust and can sense position linearly as well as pressure. However, their fingerboard is permanent, so for obvious aesthetic reasons the violin could not also be used for "regular" playing in a traditional orchestra.

When considering my options for how I moved forward in making an improved system, my distinct objectives were:

- Get a better violin. TRAVIS I is built on an inexpensive, painted Mendini violin. In terms of sound quality, I have played worse violins in the past, so I don't mind the Mendini. For amplifying and processing the sound, it is actually better that the acoustic violin is a bit quiet, so that it does not overpower the sound coming through the speakers. However, switching between it and my high-quality violin takes some adjustment for my ears. It would be nice to have a violin whose tone quality is not so strikingly different.

- Add more FSRs in order to alleviate compositional restrictions for triggers and presets.

- Remain wireless, keep the added electronics as light as possible, and clamp all of the electronics onto the violin. I like being able to move about the stage, and being able to put the instrument down without having to unclip anything from my body.

- Sense finger position linearly on all four strings, along the entire length of the fingerboard.

- Do so without having to raise the strings, which was necessary in Pardue's work. I have read an article, here, about how raising the strings can result in injury. I also have personal experience, and have heard accounts of my peers' experiences, with playing on instruments where the string height was not properly set. Even if it does not cause injury, at the very least it is very uncomfortable.

- I am not interested in pressure-sensing the fingerboard. Grosshauser and Tröster's instrument senses pressure because they were using it to study when violinists start to feel tired, and whether they have the bad habit of pressing too hard when playing. This is a wonderful tool, and I can imagine Alexander Technique teachers wanting to use it some day. However, I am primarily interested in using my instrument as a controller for composition. Good violin technique requires the fingers to be as light as possible at all times in order to play quickly, shift smoothly, reduce overall tension in the hand, and prevent injury. It would be unnatural to ask a violinist to intentionally vary finger pressure just so that pressure could be used to control sound. I would never perform this way, and I would never ask it of another violinist. TRAVIS I and II do have some pressure sensitivity, but I am not actively using changes in pressure as a control gesture.

- Whatever is used on the fingerboard should not wear down from string abrasion, as happened in Tod Machover's hypercello project. However, it should still be possible to replace the material in case it loses its conductive/resistive properties over time.

- If not all of the electronics can be removed from the violin, whatever parts remain should blend in aesthetically, so the violin at least appears "regular". This allows the violin to still be used in traditional settings. It also makes travel and flying much easier, since I don't have to worry about carrying two violins. It'll save my back too.

How I Achieved My Objectives

- Got a better quality violin.

- Worked with a luthier to replace the fingerboard

- Multiplexed the Arduino so that more sensors could be added, for a total of 8.

- 3D printed a fingerboard out of black PLA. Tracks run underneath the strings. Conductive 3D-printed strips fit into these tracks and sit flush with the natural surface of the fingerboard. The strips can slide out of the tracks if they need to be replaced. A voltage runs down the strings, so, as in Pardue's work, the strings themselves become touch sensors.

- 3D printed the Arduino case and other clamps.

- Used a JST connector to connect the strings

References

Instead of organizing my references in the typical alphabetical order, I have ordered them by category so that they are easier for me to refer back to. The categories are: Projects that Included Touch Sensors, 3D Printing, and Other. The "Other" category contains projects that augment the violin in a completely different way, as well as articles about general creative processes in interactive composition. Even though I'm not interested in tracking the right hand, I think it's important to know the general history of augmented violins so that I don't reinvent the wheel. Also, some programming/software ideas that have been used for right-hand tracking can be repurposed and remapped for the left hand.

Projects that Included Touch Sensors (or attempted to at some point in the process)

Pardue, L. S., Harte, C., & McPherson, A. P. (2015). A Low-Cost Real-Time Tracking System for Violin. Journal of New Music Research, 44(4), 1-19. https://doi.org/10.1080/09298215.2015.1087575

Grosshauser, T., & Tröster, G. (2014). Musical instrument interaction: Development of a sensor fingerboard for string instruments. In Proceedings of the 8th International Conference on Tangible, Embedded and Embodied Interaction (TEI '14). ACM, New York, NY, USA. 177-180. https://doi.org/10.1145/2540930.2540956

Grosshauser, T., & Tröster, G. (2013). Finger Position and Pressure Sensing Techniques for String and Keyboard Instruments. In Proceedings of the International Conference on New Interfaces for Musical Expression. 479-484. doi: 10.5281/zenodo.1178538

Grosshauser, T., Großekathoefer, U., & Hermann, T. (2010). New Sensors and Pattern Recognition Techniques for String Instruments. In Proceedings of the 2010 Conference on New Interfaces for Musical Expression (NIME 2010). 271-276. doi: 10.5281/zenodo.1177778

Grosshauser, T. (2008). Low force pressure measurement: Pressure sensor matrices for gesture analysis, stiffness recognition and augmented instruments. In Proceedings of the International Conference on New Interfaces for Musical Expression (NIME08). 97-202. doi: 10.5281/zenodo.1179550

Bahn, C., & Trueman, D. (2001). Interface: Electronic Chamber Ensemble. In Proceedings of the CHI'01 Workshop on New Interfaces for Musical Expression (NIME01), Seattle, WA. 19-23.

Freed, A., Wessel, D., Zbyszynski, M., & Uitti, F. M. (2006). Augmenting the Cello. New Interfaces for Musical Expression (NIME '06), 409-413.

Freed, A., Uitti, F. M., Mansfield, S., & MacCallum, J. (2013). “Old” is the new “New”: A fingerboard case study in recrudescence as a NIME development strategy. In Proceedings of NIME 2013. 442-445. doi: 10.5281/zenodo.1178524

Machover, T., & Chung, J. (1989). Hyperinstruments: Musically intelligent and interactive performance and creativity systems. In International Computer Music Conference. 186-190.

Machover, T. (1992). Hyperinstruments: A progress report, 1987–1991. Cambridge, MA: MIT Media Laboratory, 53-78. https://www.media.mit.edu/publications/hyperinstruments-a-progress-report-1987-1991/

3D Printing

Mcghee, J., Sinclair, M., Southee, D., & Wijayantha, K. (2018). Strain sensing characteristics of 3D-printed conductive plastics. Electronics Letters, 54(9), 570-571.

Leigh, S. J., Bradley, R. J., Purssell, C. P., Billson, D. R., & Hutchins, D. A. (2012). A Simple, Low-Cost Conductive Composite Material for 3D Printing of Electronic Sensors. PLoS ONE, 7(11). doi: 10.1371/journal.pone.0049365

Form Labs: Designing a 3D Printed Acoustic Violin. Retrieved from: https://formlabs.com/blog/designing-a-3d-printed-acoustic-violin/

Openfab PDX: An open source FFF 3D printed electric violin. Retrieved from: https://openfabpdx.com/fffiddle/

Other

Pardue, L. S., Buys, K., Edinger, M. I., McPherson, A., & Overholt, D. (2019). Separating Sound from Source: Sonic transformation of the violin through electrodynamic pickups and acoustic actuation. In Proceedings of the 2019 Conference on New Interfaces for Musical Expression (NIME19). Porto Alegre, Brazil. 278-283.

Thorn, S. (2019). Transference: A Hybrid Computational System for Improvised Violin Performance. In Proceedings of the 13th International Conference on Tangible, Embedded and Embodied Interaction (TEI'19). Tempe, AZ, USA. 541-546.

Overholt, D. (2011). Violin-Related HCI: A taxonomy elicited by the musical interface technology design space. In Arts and Technology: Second International Conference (ArtsIT) 2011, Esbjerg, Denmark, 80-89.

Overholt, D. (2005). The Overtone Violin. Proceedings of the 2005 International Conference on New Interfaces for Musical Expression (NIME05). 34-37.

Dalmazzo, D., & Ramirez, R. (2017). Air Violin: A Machine Learning Approach to Fingering Gesture Recognition. In Proceedings of the 1st ACM SIGCHI International Workshop on Multimodal Interaction for Education (MIE’17). ACM, New York, NY, USA. 63-66. https://doi.org/10.1145/3139513.3139526

Baschet, F. (2013). Instrumental Gesture in StreicherKreis. Contemporary Music Review, 32, 17-28.

Bevilacqua, F., Rasamimanana, N., Fléty, E., Lemouton, S., & Baschet, F. (2006). The Augmented Violin Project: Research, Composition And Performance Report. Proceedings of the 2006 International Conference on New Interfaces for Musical Expression (NIME06), Paris, France. 402-406.

Bevilacqua, F., Baschet, F., & Lemouton, S. (2012). The Augmented String Quartet: Experiments and Gesture Following. Journal of New Music Research, 41(1), 103-119. doi: 10.1080/09298215.2011.647823

Kimura, M., Rasamimanana, N., Bevilacqua, F., Zamborlin, B., Schnell, N., & Fléty, E. (2012). Extracting Human Expression For Interactive Composition with the Augmented Violin. New Interfaces for Musical Expression (NIME'12). https://hal.archives-ouvertes.fr/hal-01161009

Kimura, M. (2003). Creative Process and Performance Practice of Interactive Computer Music: A Performer's Tale. Organised Sound, 8(3), 289-296.

Guettler, K., Wilmers, H., & Johnson, V. (2008). Victoria Counts - A Case Study With Electronic Violin Bow. Proceedings of the 2008 International Computer Music Conference. 569-662.

Jensenius, A., & Johnson, V. (2012). Performing the Electric Violin in a Sonic Space. Computer Music Journal, 36(4), 28-39.

Schnell, N., Röbel, A., Schwarz, D., Peeters, G., & Borghesi, R. (2009). MuBu & Friends - Assembling Tools for Content Based Real-Time Interactive Audio Processing in Max/MSP. Proceedings of the 2009 International Computer Music Conference (ICMC).

Bresson, J., & Chadabe, J. (2017). Interactive Composition: New Steps in Computer Music Research. Journal of New Music Research, 46(1), 1-2.

McMillen, K. A. (2008). Stage-Worthy Sensor Bows For Stringed Instruments. Proceedings of the 2008 Conference on New Interfaces for Musical Expression (NIME08). 347-348.

Schoonderwaldt, E., & Demoucron, M. (2009). Extraction of bowing parameters from violin performance combining motion capture and sensors. The Journal of the Acoustical Society of America. 2695-2708. doi: 10.1121/1.3227640

Young, D. (2002). The Hyperbow: A Precision Violin Interface. International Computer Music Association. 489-492. http://hdl.handle.net/2027/spo.bbp2372.2002.100

Miranda, E., & Wanderley, M. (2006). New digital musical instruments: Control and interaction beyond the keyboard (Computer music and digital audio series; v. 21). Middleton, Wis.: A-R Editions.
