My other projects include independent electroacoustic and interactive pieces, hardware experiments, and software demos. Most were made for assignments during my undergraduate or master's studies; others were personal projects.

Electroacoustic and Interactive Pieces

Pizzicato | Backstage and Backstage 2.0 | Original made in 2015; Interactive Version 2016.

Individuals | Time

"Sound of My Hands" is an improvisation for any performer. It uses basic hand gestures to control sound via Leap Motion, while also inviting the listener to focus on recordings of sounds that the hands can make. There are three sections that explore different sound processes. It was originally made for 20-channel ambisonics, but can be arranged for any number of channels. In a live performance, the hands are projected on screen, and the piece can be performed for any length of time.

2019

An interactive piece that is controlled with TouchOSC. It was my first time experimenting with Max 8's [mc] objects and the [jit.graph] object. This was a course assignment from late 2018. It is best to listen with headphones.

“Backstage” attempts to mimic the sounds often found at highland dance competitions, and it fits those sounds into a formal musical structure. It is driven by rhythms from the Jig and the Sailor’s Hornpipe, which are in compound meter and simple meter respectively. The sections of the piece are marked by the breaks from these dances. To enhance the atmosphere, other sounds include sandpapering dance shoes, unraveling medical tape, announcements, and general chatter.

Since the original "Backstage" was an electroacoustic piece, I wanted to experiment with making it more interactive. I started by making the rhythm a bed track, and used colour tracking to start, stop, and play back live recordings of my violin. Colour tracking also banged the individual samples of talking, sandpapering shoes, and other sounds. The same colour tracking threshold that banged the samples also changed the image being displayed. I used pitch tracking to affect the hue of the images. I also used the [bonk~] object to bang extra layers from the bed track rhythm when I clapped. These layers also gradually sped up, which I found fitting because in Highland Dance, a clap from the dancer signals the quick time.

I originally wanted to make a Scottish/Celtic-sounding drum piece. Of course, I don't have actual drums around, so I used chopsticks against a cooking pot, a metal heater, bicycle wheels, and a glass lamp, and I knocked on a wall. I had planned rhythm cycles for each "drum", with interlocking patterns held together by a groove. But when listening to the final mix, it sounds more like individual parts layered over each other.

2017

At the tail end of 2019, I looked through my portfolio and listened to my past work. I found that I now like it better without the violin, so I decided to do a re-upload without it. I'm not going to take down the old version because I think it's nice to be able to go back and compare.

Time is about contrasts and the slow development of short ideas that run into a dissonant, familiar, taunting motive. The main contrast is predetermined by a squeaky locker sample that is slowed down: rumbling and random onsets turning into a steady pulse, then controlled placement of onsets, then briefly back into chaos. Other contrasts are found between the two timbres of the longer drones, and in the mostly consonant-like intervals between the drones in the beginning that become more dissonant by the end.

There are only four samples: forks, chopsticks, a squeaky locker, and a hum from a voice.

2015

Hardware Based Work

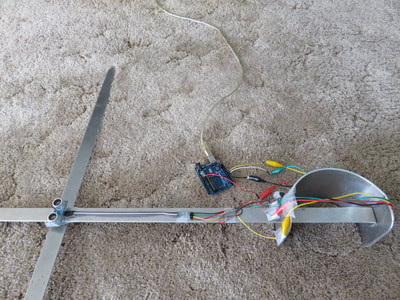

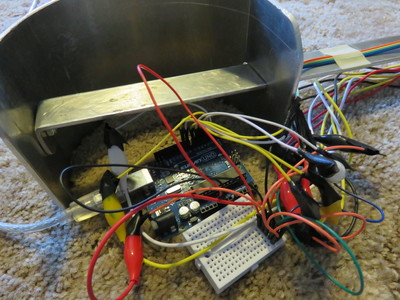

Ultrasonic Sword | "Ironman Glove"

The sword dance, or Ghillie Callum, is a traditional highland dance that utilizes either two swords, or a sword and a scabbard. According to the lore, if the dancer touches his sword, he will be injured in the upcoming battle. If he kicks it... let's just say he won't make it in time for dinner.

I placed an ultrasonic sensor in the middle of my practice sword to measure distance as my feet passed over it, and connected it to an Arduino Uno. For this demo, I didn't do anything too fancy. I just used the measurements to control the Party Lights Jitter recipe, as well as the pitch shifting and harmonizer modules from the UBC toolbox. I didn't have any electrical tape available at the time, so I made do with scotch tape (it's a prototype). You may hear the jingle of one of my dog's collars in the background.

For the second version, I placed four ultrasonic sensors, one in the middle of each of the four swords (dance theory jargon). It didn't work quite as well as I had hoped. At first, three of the four sensors were working perfectly (the pink one on sword 2 was weird). Then I *carefully* moved the entire setup backwards so I could take the video. That caused the sensor on sword 3 (purple) to act similarly to the pink one, and the sensor on sword 1 (green) to work so-so. The sensor on sword 4 (blue) stayed the same as before I moved the swords. It was almost too sensitive. I know the sword itself is conductive, and the wires are bunched together, so I taped all of the connections with electrical tape. I also tried adjusting the angle of the sensors, but that did not seem to fix the problem. I am unsure of the cause. If I want to do this in the future, I may just go back to one sensor, or possibly two.

I tried one more time without the sword, and the results seemed somewhat improved. It makes me wonder if the sword was making contact with a few of the connections after all, but I'm not sure where. I was being really careful about covering the connections with electrical tape. Or maybe it had something to do with the angle. The sensors should also be sensing much longer distances than they are in the fourth video.

I made a textile pressure sensor with conductive fabric on either side and Velostat sandwiched in the middle. To demonstrate, I had a bit of fun sewing it onto a red glove and having it trigger an Ironman glove sound sample and Black Sabbath. Since the glove is red, I threw in colour tracking to trigger the blast sound.
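As a rough illustration of how a pressure sensor like this can trigger a sample, here is a minimal Python sketch. It is not the patch used for the glove. It assumes the sensor is read through a voltage divider into an analog input that reports values from 0 to 1023, and that a higher value means more pressure (Velostat's resistance drops when it is squeezed, but the direction of the reading depends on the wiring). The function name and both threshold values are hypothetical. Using two thresholds (hysteresis) keeps a single squeeze from firing the sample several times when the reading wobbles around one level.

```python
from typing import Iterable, List


def detect_presses(readings: Iterable[int],
                   on_level: int = 600,
                   off_level: int = 400) -> List[int]:
    """Return the indices at which a new press starts.

    A press starts when a reading rises to on_level or above. The trigger
    re-arms only after a reading falls to off_level or below, so small
    wobbles in between do not retrigger the sample.
    Both levels are made-up values for a 0-1023 analog reading.
    """
    pressed = False
    starts = []
    for index, value in enumerate(readings):
        if not pressed and value >= on_level:
            pressed = True
            starts.append(index)  # this is where the sample would be banged
        elif pressed and value <= off_level:
            pressed = False
    return starts


# Simulated readings: one squeeze that wobbles, a release, then a second squeeze.
readings = [120, 300, 650, 610, 590, 640, 380, 200, 700, 720, 100]
print(detect_presses(readings))
```

With the simulated readings, the wobble between 590 and 650 counts as one press, and the sample would fire again only after the glove has been released.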

Expanded Version: As of March 1, 2018, my SUBCLASS group has been assigned to use RUBS, but the engineering students are not finished making my partner's suit. So, I added more sensors to my glove so that she could at least mark out what she wants to do in rehearsals. It's a bit of a rough job, but it is not meant to be the "final version". Her suit should be ready soon!

Software Based Work

SuperCollider controlling Max MSP/Jitter - Demo | Music Theory Teaching Tool

This was a final project for an independent study course on learning the basics of SuperCollider. My goals were to have most parameters controlled by GUIs, use both synths and buffers, and send data to Max MSP via OSC messaging. The visuals done in Max had to be mostly syncretic with the sounds from SuperCollider.
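To give an idea of what an OSC message looks like on the wire, here is a small Python sketch. The project itself sent its messages from SuperCollider, so this is only an illustration and not the project code. It builds a message by hand following the OSC 1.0 layout (a null-padded address, a null-padded type tag string, then big-endian 32-bit floats) and sends it over UDP. The address `/freq`, the value, and port 7400 are assumptions made up for the example.

```python
import socket
import struct


def osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii")
    padding = 4 - (len(data) % 4)  # always adds between 1 and 4 null bytes
    return data + b"\x00" * padding


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(values)
    payload = b"".join(struct.pack(">f", value) for value in values)
    return osc_string(address) + osc_string(type_tags) + payload


def send_osc(address: str, *values: float,
             host: str = "127.0.0.1", port: int = 7400) -> None:
    """Send one OSC message over UDP. Host and port are hypothetical defaults."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message(address, *values), (host, port))


# Build (but do not send) a message carrying one frequency value.
packet = osc_message("/freq", 440.0)
print(packet)
```

Calling `send_osc("/freq", 440.0)` would send the packet to the local machine. On the Max side, a [udpreceive] object listening on the same port could pick it up and route it to the visuals.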

2018

When I started teaching violin and theory online, I was faced with two problems: how to clearly show intervals and chords through a computer screen, and how to relate them back to my students' instrument in a way that made sense. Writing on a piece of paper and then holding it up to the camera is not great because it is hard to see. By making this patch, I could share my screen and simultaneously show the notes on the keyboard, staff, and violin finger chart. In this pre-recorded video, I use the patch to explain the fingerings for the D Major scale.
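The link between a note and the violin finger chart can be sketched in a few lines of Python. This is not how the Max patch works internally; it is a simplified illustration that assumes first position only, uses MIDI note numbers (middle C = 60), and always picks the highest string whose open pitch is at or below the note. The names `OPEN_STRINGS`, `FINGER_FOR_OFFSET`, and `fingering` are made up for the example.

```python
# Open-string pitches of the violin as MIDI note numbers: G3, D4, A4, E5.
OPEN_STRINGS = {"G": 55, "D": 62, "A": 69, "E": 76}

# Simplified first-position chart: semitones above the open string -> finger.
# 0 is the open string; fingers 1 to 3 each cover a low and a high placement.
FINGER_FOR_OFFSET = {0: 0, 1: 1, 2: 1, 3: 2, 4: 2, 5: 3, 6: 3, 7: 4}


def fingering(midi_note: int) -> tuple:
    """Return (string name, finger number) for a note in first position."""
    candidates = [(pitch, name) for name, pitch in OPEN_STRINGS.items()
                  if pitch <= midi_note]
    if not candidates:
        raise ValueError("note is below the open G string")
    open_pitch, string = max(candidates)  # highest string at or below the note
    offset = midi_note - open_pitch
    if offset not in FINGER_FOR_OFFSET:
        raise ValueError("note is above first position in this simplified chart")
    return string, FINGER_FOR_OFFSET[offset]


# One-octave D major scale starting on the open D string: D E F# G A B C# D.
d_major = [62, 64, 66, 67, 69, 71, 73, 74]
for note in d_major:
    print(note, fingering(note))
```

For the D major scale, this gives open, 1, 2, 3 on the D string followed by open, 1, 2, 3 on the A string.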

I made this application with Max MSP. You may use it yourself if you download it from my Patreon. Patreon is a monthly subscription service. At the moment, this is the only application on my Patreon, so I don't mind if you sign up for one month, download the application, and then cancel the subscription after the first payment: https://www.patreon.com/posts/violin-tool-32356346